How The Misinformation Ecosystem Threatens Global Stability

Kingsley Okon

Misinformation has become one of the defining challenges of the digital age. Misinformation is false or inaccurate information shared without the intent to deceive. Disinformation, on the other hand, is false information deliberately created and spread to mislead, manipulate, or cause harm. Both involve falsehoods, but the key difference lies in intent.

Not every error feeds the problem. Honest mistakes occur when journalists, institutions, or individuals unknowingly share incorrect information and then correct it once verified facts emerge. Misinformation and disinformation, by contrast, often persist even after being debunked. They thrive on social platforms and in online echo chambers, where speed, emotion, and virality are rewarded more than accuracy. Understanding how this ecosystem works is crucial, because misinformation does not simply misinform; it shapes opinions, influences behaviour, and can destabilise societies.

Who Creates And Benefits From Misinformation?

Political actors are among the most prominent drivers of misinformation. Around the world, false narratives are used to influence elections, discredit opponents, suppress voter turnout, or mobilise supporters through fear and anger. During election cycles, misleading claims about candidates, voting procedures, or electoral outcomes spread rapidly on social media platforms. These narratives often appeal to identity, ideology, or existing grievances, making them emotionally compelling and easily shareable.

Research by the Oxford Internet Institute, covering 70 countries, has shown that organised political misinformation campaigns operate worldwide, using bots, trolls, and coordinated networks to amplify misleading content. The study found that these campaigns benefit political actors by shaping public discourse, polarising societies, and weakening trust in democratic institutions. Even when false claims are later corrected, their initial impact often lingers, influencing perceptions long after the truth emerges.

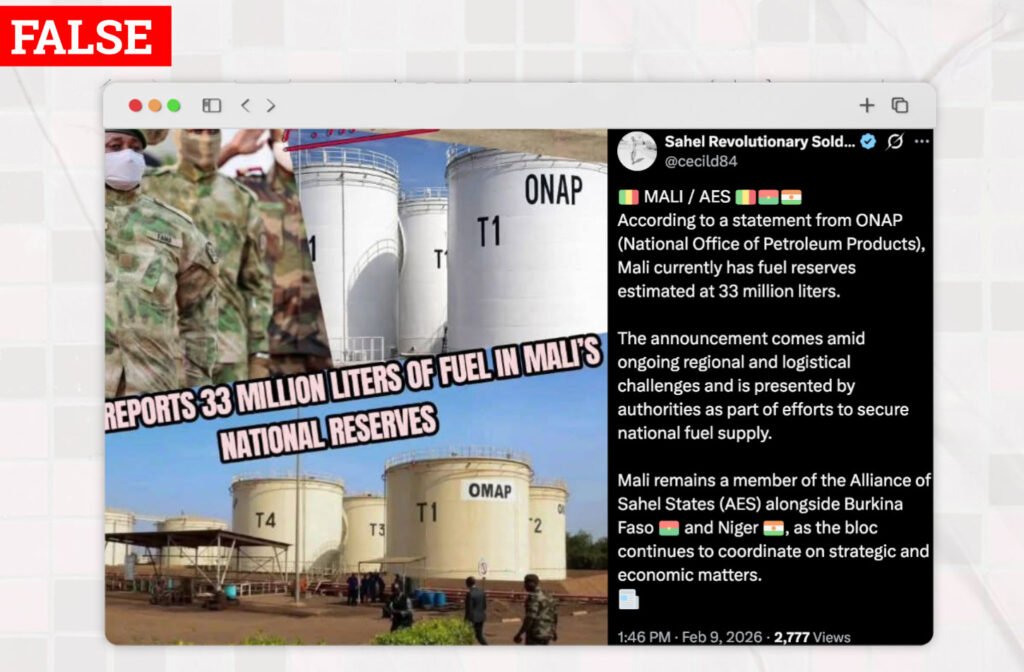

Beyond politics, misinformation is also fuelled by financial incentives. Clickbait websites and content farms publish sensational or false stories designed to attract attention, generate clicks, and earn advertising revenue. The more shocking or emotionally charged the headline, the more likely users are to click, share, and engage. This attention-driven economy rewards misinformation because accurate reporting takes time, while falsehoods can be produced quickly and cheaply.

Social media monetisation further accelerates this problem. Influencers, page owners, and content creators may share misleading information, knowingly or not, to grow their followings, boost engagement, or qualify for monetisation programmes. Research by MIT scientists found that false news spreads faster and reaches more people online than true news, largely because it is more novel and emotionally engaging. In this system, misinformation becomes a business model, and the prospect of financial gain makes it an irresistible lure for opportunistic content creators.

What Are The Real-World Consequences?

The effects of misinformation are not limited to online spaces. In the real world, false claims have triggered public panic, protests, and even violence. Rumours spread through social media or messaging apps have led to riots, mob attacks, and communal tensions in different parts of the world. Once fear takes hold, corrections often fail to reach the same audience or achieve the same emotional impact as the original falsehood.

Health misinformation is another area with serious consequences. False claims about vaccines, treatments, or disease origins have undermined public health efforts, particularly during global health crises. During the COVID-19 pandemic, misinformation about vaccines, unproven cures, and conspiracy theories contributed to vaccine hesitancy and mistrust in health authorities. The World Health Organization (WHO) described this phenomenon as an “infodemic”, warning that misinformation can cost lives by influencing people to reject evidence-based medical advice.

Misinformation can also cause financial damage. False reports about companies, financial institutions, or government policies have triggered market fluctuations, stock sell-offs, and consumer panic. In some cases, a single misleading post can wipe billions off a company’s market value before it is corrected. These economic impacts show how misinformation can distort decision-making at both individual and institutional levels.

How Do We Fight Misinformation?

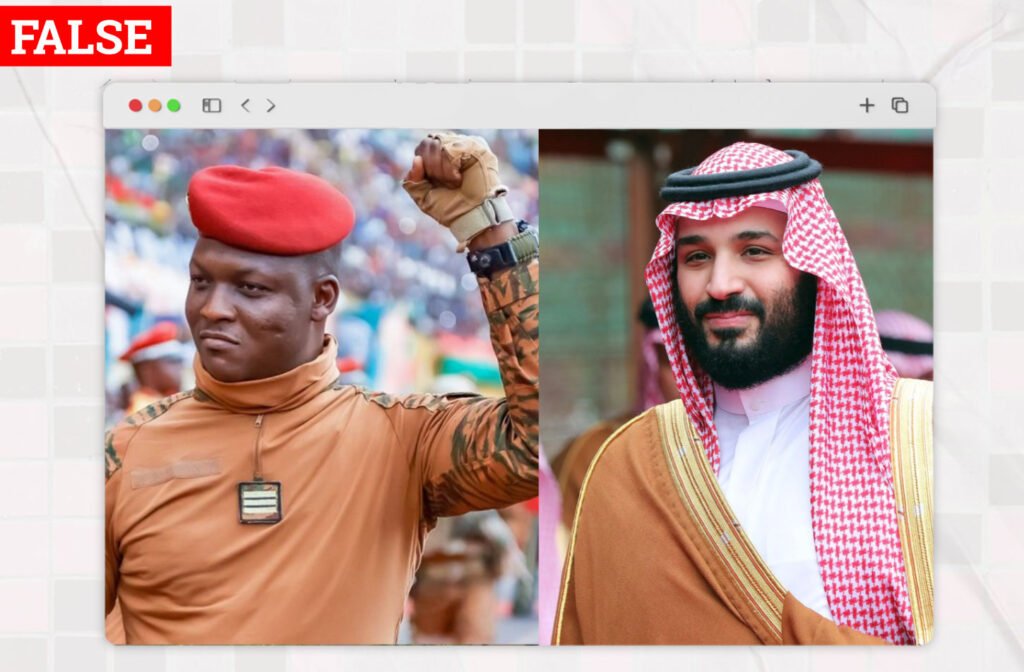

One of the most effective tools against misinformation is professional fact-checking. Independent fact-checking organisations use transparent methodologies to verify claims, assess evidence, and publish corrections. Organisations such as Africa Check, Dubawa, News Verifier Africa, and FactCheck.org play a critical role in countering false narratives, especially during elections and public health crises.

Digital tools like Google Reverse Image Search, TinEye, and InVID enable journalists and researchers to trace the origins and spread of misleading content. However, fact-checking alone is not enough if corrections do not reach the same audiences as the original falsehoods.

This brings us to platform responsibility. Social media companies sit at the centre of the misinformation ecosystem. Their algorithms prioritise engagement, often amplifying sensational content regardless of accuracy. In response to criticism, platforms have introduced measures such as content moderation, warning labels, reduced distribution of false posts, and partnerships with fact-checkers.

In the early years of social media, many platforms positioned themselves as neutral intermediaries rather than publishers, arguing that they should not be held accountable for user-generated content. That stance has shifted. Meta, for example, pledged to strengthen its editorial and content moderation processes ahead of Australia’s upcoming election, amid growing concerns over AI-generated deepfakes and coordinated misinformation campaigns. The move followed mounting regulatory scrutiny and public pressure on major technology platforms to safeguard electoral integrity, particularly after controversies in previous elections worldwide.

Governments have also stepped into the fight against misinformation through laws, regulations, and public awareness campaigns. Some countries have introduced legislation targeting coordinated disinformation campaigns, foreign interference, or harmful false content. Others focus on media literacy initiatives, teaching citizens how to critically evaluate information and recognise manipulation.

Conclusion

Misinformation spreads faster than facts because it exploits human psychology, emotional reactions, and digital algorithms that reward speed and engagement. In an attention economy, falsehoods often outcompete careful, evidence-based reporting. Combating this challenge requires a collective effort.

Individuals must pause before sharing, verify sources, and question emotionally charged content. Media organisations must uphold rigorous standards of verification and correction. Platforms must redesign systems that amplify harm, while governments must pursue balanced policies that protect both public safety and freedom of expression.

Such collective action matters not only for national politics but for global stability. In an interconnected world, misinformation can distort elections, inflame ethnic and religious tensions, undermine public health responses, and strain diplomatic relations between states. When falsehoods spread faster than facts, trust in institutions erodes, cooperation weakens, and collective problem-solving becomes harder. The fight against misinformation is therefore not simply about correcting inaccurate claims; it is about safeguarding democratic governance, social cohesion, and humanity’s ability to confront shared global challenges.